How to Build Your First MCP Server in 30 Minutes

HANDS-ON TUTORIAL — UPDATED MARCH 2026

MCP (Model Context Protocol) is the open standard that connects AI models to your tools and data. In this tutorial, you'll build a working MCP server from scratch — one that Claude, ChatGPT, and Cursor can actually use. No prior MCP experience needed. Just Python or TypeScript and 30 minutes.

By the end of this tutorial, you'll have:

- A working MCP server with 3 custom tools

- That server connected to Claude Desktop

- Enough understanding of the MCP architecture to start building production servers

What Is MCP and Why Should You Care?

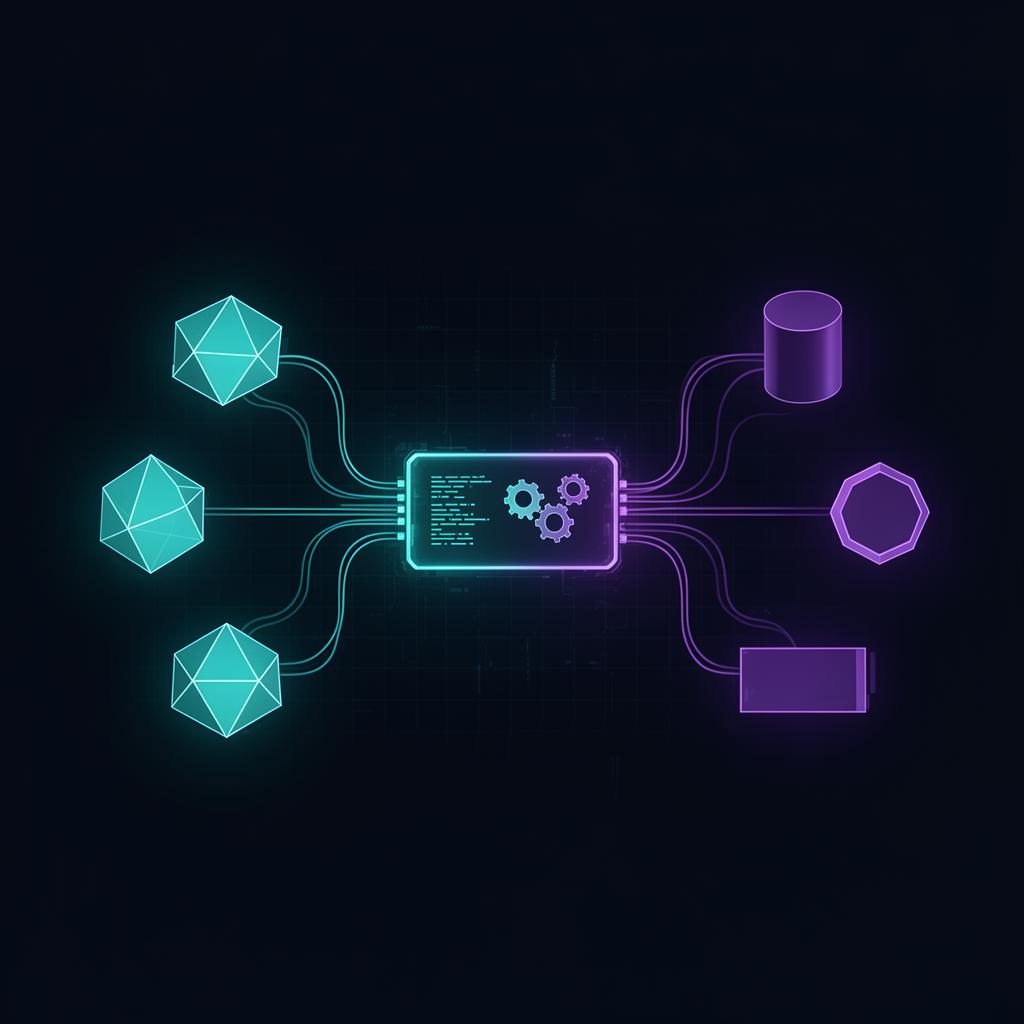

MCP (Model Context Protocol) is an open standard created by Anthropic that lets AI models connect to external tools and data sources through a unified interface. Think of it as USB-C for AI — you build one server, and every major AI assistant (Claude, ChatGPT, Gemini, Cursor) can use your tools. As of March 2026, over 10,000 MCP servers exist in the ecosystem.

Before MCP, connecting AI to your tools meant building separate integrations for each platform — one plugin for ChatGPT, a different extension for Claude, yet another for Cursor. MCP eliminates this fragmentation with a single protocol. You build once, it works everywhere.

MCP has three building blocks:

- Tools — functions the AI can call (search database, send email, create ticket)

- Resources — data the AI can read (files, database records, API responses)

- Prompts — reusable templates that guide AI behavior for specific tasks

If you want a deeper dive into the protocol itself, read our complete MCP guide. In this tutorial, we're focused on building.

What Do You Need Before Starting?

You need Python 3.10+ or Node.js 18+, a terminal, and the Claude Desktop app installed — that's the full list. No AWS account, no Docker, no complex infrastructure. We're building locally first, which is how most production MCP servers start anyway. The entire setup takes under 5 minutes.

Here's the complete prerequisites list:

- Python 3.10+ or Node.js 18+ installed on your machine

- Basic knowledge of Python or TypeScript (you don't need to be an expert)

- Claude Desktop app installed (free, download from claude.ai)

- A terminal / command line

- 30 minutes of uninterrupted time


How Do You Set Up the Project?

Setting up an MCP project requires creating a directory, installing the official SDK, and creating a single server file. The Python SDK is called mcp and the TypeScript SDK is @modelcontextprotocol/sdk. Both are official SDKs from the Model Context Protocol project and implement the same protocol.

Python Setup

```bash
mkdir my-mcp-server
cd my-mcp-server
python -m venv venv
source venv/bin/activate  # Windows: venv\Scripts\activate
pip install "mcp[cli]" httpx
touch server.py
```

TypeScript Setup

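```bash
mkdir my-mcp-server
cd my-mcp-server
npm init -y
npm pkg set type=module   # the SDK imports below use ES module syntax
npm install @modelcontextprotocol/sdk zod
npm install -D typescript @types/node
touch server.ts

# After writing server.ts, compile it to server.js (the file Claude Desktop runs):
npx tsc --module nodenext --target es2022 server.ts
```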

Your project is ready. The [cli] extra installs the mcp command-line tool, which we'll use later to launch the MCP Inspector for testing. The httpx library (Python) is for making HTTP requests in tools. zod (TypeScript) handles input validation.

How Do You Build a Basic MCP Server?

An MCP server is a program that declares tools and handles requests from AI models over a standard transport (stdio or HTTP). Here's the simplest possible server — it has one tool that returns the current time. This is your "Hello World" for MCP, and it actually works with Claude Desktop.

Python — Minimal MCP Server

```python
from datetime import datetime

from mcp.server.fastmcp import FastMCP

# FastMCP is the high-level server API in the official Python SDK
server = FastMCP("my-first-server")


@server.tool(name="get_current_time", description="Returns the current date and time")
async def get_current_time() -> str:
    """Get the current date and time in ISO format."""
    now = datetime.now().isoformat(timespec="seconds")
    return f"Current time is: {now}"


if __name__ == "__main__":
    # Runs over stdio by default, which is what Claude Desktop expects
    server.run()
```

TypeScript — Minimal MCP Server

```typescript
import { McpServer } from "@modelcontextprotocol/sdk/server/mcp.js";
import { StdioServerTransport } from "@modelcontextprotocol/sdk/server/stdio.js";

const server = new McpServer({
  name: "my-first-server",
  version: "1.0.0",
});

server.tool(
  "get_current_time",
  "Returns the current date and time",
  {},
  async () => {
    const now = new Date().toISOString();
    return {
      content: [{ type: "text", text: `Current time is: ${now}` }],
    };
  }
);

const transport = new StdioServerTransport();
server.connect(transport);
```

That's a working MCP server. About 15 lines of code. The server declares one tool (get_current_time), and when an AI model calls it, the server returns the current timestamp. Now let's make it actually useful.
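Under the hood, the SDK and the client exchange JSON-RPC 2.0 messages, and on the stdio transport each message is one line of JSON. The standard-library sketch below shows roughly what a `tools/list` and `tools/call` exchange for `get_current_time` looks like. It only illustrates message shapes and is not a usable server: it skips the `initialize` handshake, capability negotiation, and everything else the SDK handles, and `handle_line` and `_error` are hypothetical helpers.

```python
import json
from datetime import datetime

# How tools/list would advertise the single tool (empty input schema: no arguments)
TOOLS = [
    {
        "name": "get_current_time",
        "description": "Returns the current date and time",
        "inputSchema": {"type": "object", "properties": {}},
    }
]


def _error(request_id: int, code: int, message: str) -> str:
    """Build a JSON-RPC error response line."""
    return json.dumps({"jsonrpc": "2.0", "id": request_id, "error": {"code": code, "message": message}})


def handle_line(line: str) -> str:
    """Handle one newline-delimited JSON-RPC request and return one response line."""
    request = json.loads(line)
    method = request["method"]

    if method == "tools/list":
        result = {"tools": TOOLS}
    elif method == "tools/call":
        name = request["params"]["name"]
        if name != "get_current_time":
            return _error(request["id"], -32602, f"Unknown tool: {name}")  # invalid params
        now = datetime.now().isoformat(timespec="seconds")
        # Tool results carry a list of content items; text is the most common type
        result = {"content": [{"type": "text", "text": f"Current time is: {now}"}], "isError": False}
    else:
        return _error(request["id"], -32601, f"Method not found: {method}")

    return json.dumps({"jsonrpc": "2.0", "id": request["id"], "result": result})
```

For example, `handle_line('{"jsonrpc": "2.0", "id": 1, "method": "tools/list"}')` returns a response line with the same `id` and the tool list. The real SDK also answers `initialize`, `ping`, and notifications, which this sketch leaves out.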

How Do You Add Custom Tools?

Adding custom tools means defining functions with clear names, descriptions, and typed parameters that AI models can discover and call. Let's build something practical — a task manager MCP server with 3 tools: add tasks, list tasks, and complete tasks. This is the kind of server AI assistants use in real workflows.

Python — Task Manager Server

```python
from datetime import datetime
from uuid import uuid4

from mcp.server.fastmcp import FastMCP

server = FastMCP("task-manager")

# In-memory task store (cleared whenever the server restarts)
tasks: list[dict] = []


@server.tool(name="add_task", description="Add a new task to the task list")
async def add_task(title: str, priority: str = "medium") -> str:
    """Add a task with a title and priority level (low, medium, high)."""
    if priority not in ("low", "medium", "high"):
        return f"Error: priority must be low, medium, or high. Got: {priority}"
    task = {
        "id": str(uuid4())[:8],
        "title": title,
        "priority": priority,
        "completed": False,
        "created_at": datetime.now().isoformat(),
    }
    tasks.append(task)
    return f"Task added: [{task['id']}] {title} (priority: {priority})"


@server.tool(name="list_tasks", description="List all tasks with their status")
async def list_tasks() -> str:
    """Show all tasks including completed and pending ones."""
    if not tasks:
        return "No tasks yet. Use add_task to create one."
    lines = []
    for t in tasks:
        status = "✅" if t["completed"] else "⬜"
        lines.append(
            f"{status} [{t['id']}] {t['title']} "
            f"(priority: {t['priority']})"
        )
    return "\n".join(lines)


@server.tool(name="complete_task", description="Mark a task as completed by its ID")
async def complete_task(task_id: str) -> str:
    """Complete a task. Use list_tasks first to find the task ID."""
    for t in tasks:
        if t["id"] == task_id:
            t["completed"] = True
            return f"Task [{task_id}] '{t['title']}' marked as complete ✅"
    return f"Error: No task found with ID {task_id}. Use list_tasks to see available tasks."


if __name__ == "__main__":
    server.run()
```

TypeScript — Task Manager Server

```typescript
import { McpServer } from "@modelcontextprotocol/sdk/server/mcp.js";
import { StdioServerTransport } from "@modelcontextprotocol/sdk/server/stdio.js";
import { z } from "zod";

const server = new McpServer({
  name: "task-manager",
  version: "1.0.0",
});

interface Task {
  id: string;
  title: string;
  priority: "low" | "medium" | "high";
  completed: boolean;
  createdAt: string;
}

const tasks: Task[] = [];

server.tool(
  "add_task",
  "Add a new task to the task list",
  {
    title: z.string().describe("The task title"),
    priority: z.enum(["low", "medium", "high"])
      .default("medium")
      .describe("Task priority level"),
  },
  async ({ title, priority }) => {
    const task: Task = {
      id: Math.random().toString(36).slice(2, 10),
      title,
      priority,
      completed: false,
      createdAt: new Date().toISOString(),
    };
    tasks.push(task);
    return {
      content: [{
        type: "text",
        text: `Task added: [${task.id}] ${title} (priority: ${priority})`,
      }],
    };
  }
);

server.tool(
  "list_tasks",
  "List all tasks with their status",
  {},
  async () => {
    if (tasks.length === 0) {
      return {
        content: [{ type: "text", text: "No tasks yet. Use add_task to create one." }],
      };
    }
    const lines = tasks.map((t) => {
      const status = t.completed ? "✅" : "⬜";
      return `${status} [${t.id}] ${t.title} (priority: ${t.priority})`;
    });
    return { content: [{ type: "text", text: lines.join("\n") }] };
  }
);

server.tool(
  "complete_task",
  "Mark a task as completed by its ID",
  {
    task_id: z.string().describe("The ID of the task to complete"),
  },
  async ({ task_id }) => {
    const task = tasks.find((t) => t.id === task_id);
    if (!task) {
      return {
        content: [{
          type: "text",
          text: `Error: No task found with ID ${task_id}. Use list_tasks to see available tasks.`,
        }],
      };
    }
    task.completed = true;
    return {
      content: [{
        type: "text",
        text: `Task [${task_id}] '${task.title}' marked as complete ✅`,
      }],
    };
  }
);

const transport = new StdioServerTransport();
server.connect(transport);
```

Now you have a server with 3 tools. The AI can add tasks, list them, and mark them complete — all through natural language. Ask Claude "Add a task called Review MCP tutorial with high priority" and watch it work.

How Do You Connect Your MCP Server to Claude?

Connecting an MCP server to Claude Desktop requires editing a JSON configuration file that tells Claude where your server is and how to run it. The process takes about 2 minutes. Once connected, Claude automatically discovers your tools and can use them in any conversation.

Follow these steps:

- Open Claude Desktop on your computer

- Go to Settings → Developer

- Click "Edit Config" to open claude_desktop_config.json (on macOS it lives in ~/Library/Application Support/Claude/, on Windows in %APPDATA%\Claude\)

- Add your server configuration:

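For Python (point command at the virtual environment's interpreter so the mcp package is found; on Windows that is venv\Scripts\python.exe, with backslashes doubled in JSON):

```json
{
  "mcpServers": {
    "task-manager": {
      "command": "/full/path/to/my-mcp-server/venv/bin/python",
      "args": ["/full/path/to/my-mcp-server/server.py"]
    }
  }
}
```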

For TypeScript (after compiling server.ts to server.js):

```json
{
  "mcpServers": {
    "task-manager": {
      "command": "node",
      "args": ["/full/path/to/my-mcp-server/server.js"]
    }
  }
}
```

- Save the file and restart Claude Desktop

- Look for the hammer icon (🔨) in the chat input area

After restarting, you should see the hammer icon in the Claude chat input. Click it — you'll see your tools listed: add_task, list_tasks, and complete_task. Now try asking Claude:

"Add a task called Review MCP tutorial with high priority"

Claude will call your add_task tool and confirm it was added. Then ask "Show me all my tasks" — Claude calls list_tasks automatically.
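If you prefer not to hand-edit the JSON, a small standard-library script can add or update the entry for you. `add_mcp_server` is a hypothetical helper, not part of Claude Desktop or the MCP SDK; it assumes the mcpServers layout shown above. Close Claude Desktop first and keep a backup of the file.

```python
import json
from pathlib import Path


def add_mcp_server(config_path: Path, name: str, command: str, args: list[str]) -> dict:
    """Add or replace one entry under "mcpServers", keeping every other setting intact."""
    config: dict = {}
    if config_path.exists() and config_path.read_text().strip():
        config = json.loads(config_path.read_text())

    # setdefault preserves any servers that are already configured
    config.setdefault("mcpServers", {})[name] = {"command": command, "args": args}

    config_path.parent.mkdir(parents=True, exist_ok=True)
    config_path.write_text(json.dumps(config, indent=2) + "\n")
    return config
```

For example, call `add_mcp_server(Path.home() / "Library/Application Support/Claude/claude_desktop_config.json", "task-manager", "/full/path/to/my-mcp-server/venv/bin/python", ["/full/path/to/my-mcp-server/server.py"])` on macOS, substituting your own paths, then restart Claude Desktop.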


How Do You Add Input Validation?

Input validation in MCP servers ensures that AI models send correctly formatted data before your tool logic runs. Without validation, a model might send a string where you expect a number, or skip a required field entirely. The Python SDK uses Pydantic and the TypeScript SDK uses Zod for declarative schema validation.

Python — Pydantic Validation

```python
from datetime import datetime
from typing import Annotated, Literal
from uuid import uuid4

from pydantic import Field


# Drop-in replacement for add_task in the task manager above (reuses its server and tasks)
@server.tool(name="add_task", description="Add a new task to the task list")
async def add_task(
    title: Annotated[
        str,
        Field(description="The task title", min_length=1, max_length=200),
    ],
    priority: Annotated[
        Literal["low", "medium", "high"],
        Field(description="Priority: low, medium, or high"),
    ] = "medium",
) -> str:
    task = {
        "id": str(uuid4())[:8],
        "title": title.strip(),
        "priority": priority,
        "completed": False,
        "created_at": datetime.now().isoformat(),
    }
    tasks.append(task)
    return f"Task added: [{task['id']}] {task['title']} (priority: {priority})"
```

TypeScript — Zod Validation

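```typescript
server.tool(
  "add_task",
  "Add a new task to the task list",
  {
    title: z.string()
      .min(1, "Title cannot be empty")
      .max(200, "Title too long")
      .describe("The task title"),
    priority: z.enum(["low", "medium", "high"])
      .default("medium")
      .describe("Task priority level"),
  },
  async ({ title, priority }) => {
    // Arguments have already passed the Zod schema when this runs
    const task: Task = {
      id: Math.random().toString(36).slice(2, 10),
      title: title.trim(),
      priority,
      completed: false,
      createdAt: new Date().toISOString(),
    };
    tasks.push(task);
    return {
      content: [{ type: "text", text: `Task added: [${task.id}] ${task.title} (priority: ${priority})` }],
    };
  }
);
```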

Now if the AI (or a test script) sends invalid data — an empty title, a priority of "urgent" instead of "high" — your server returns a clear validation error instead of crashing or producing garbage output.
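Both SDKs turn these declarations into a JSON Schema, advertised as the tool's inputSchema in the tools/list response, and check incoming arguments against it before your function runs. The standard-library sketch below hand-writes roughly that schema for add_task plus a tiny checker for just these constraints, to show what the SDK enforces on your behalf. The exact generated schema differs in details (titles, extra keywords), and `check_add_task_args` is a hypothetical helper; in a real server, let Pydantic or Zod do this.

```python
# Roughly the inputSchema advertised for add_task (hand-written and simplified)
ADD_TASK_SCHEMA = {
    "type": "object",
    "properties": {
        "title": {"type": "string", "minLength": 1, "maxLength": 200},
        "priority": {"type": "string", "enum": ["low", "medium", "high"], "default": "medium"},
    },
    "required": ["title"],
}


def check_add_task_args(args: dict) -> list[str]:
    """Return a list of validation errors (empty when the arguments are valid)."""
    errors = []
    props = ADD_TASK_SCHEMA["properties"]

    for field in ADD_TASK_SCHEMA["required"]:
        if field not in args:
            errors.append(f"{field}: field required")

    if "title" in args:
        title = args["title"]
        if not isinstance(title, str):
            errors.append("title: must be a string")
        elif not props["title"]["minLength"] <= len(title) <= props["title"]["maxLength"]:
            errors.append("title: length must be between 1 and 200")

    # Missing priority falls back to the schema default
    priority = args.get("priority", props["priority"]["default"])
    if priority not in props["priority"]["enum"]:
        errors.append(f"priority: must be one of {props['priority']['enum']}, got {priority!r}")

    return errors
```

For example, `check_add_task_args({"title": "x", "priority": "urgent"})` returns a single error mentioning `'urgent'`, which mirrors the rejection the SDK sends back to the model.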

How Do You Add Error Handling?

Error handling in MCP servers means catching failures in your tool logic and returning structured error responses instead of crashing the entire server process. When an API call fails, a file doesn't exist, or a database query times out, the AI model needs a clear error message it can relay to the user — not a Python traceback.

Python Error Handling Pattern

```python
import logging

logger = logging.getLogger(__name__)


# document_api and format_results are placeholders for your own client and formatter
@server.tool(name="search_documents", description="Search company documents by keyword")
async def search_documents(query: str) -> str:
    try:
        results = await document_api.search(query)
        if not results:
            return f"No documents found matching '{query}'. Try different keywords."
        return format_results(results)
    # Built-in exception types; an HTTP client such as httpx raises its own
    # (httpx.ConnectError, httpx.TimeoutException), so catch whatever yours raises
    except ConnectionError:
        return "Error: Unable to connect to the document service. Please try again later."
    except TimeoutError:
        return "Error: Search timed out. Try a more specific query."
    except Exception:
        # Log the full error and traceback for debugging
        logger.exception("search_documents failed")
        # Return a safe message to the AI: never expose stack traces
        return "Error: An unexpected error occurred while searching. Please try again."
```

TypeScript Error Handling Pattern

```typescript
// documentApi and formatResults are placeholders for your own client and formatter
server.tool(
  "search_documents",
  "Search company documents by keyword",
  { query: z.string().min(1).describe("Search keyword") },
  async ({ query }) => {
    try {
      const results = await documentApi.search(query);
      if (results.length === 0) {
        return {
          content: [{
            type: "text",
            text: `No documents found matching '${query}'. Try different keywords.`,
          }],
        };
      }
      return { content: [{ type: "text", text: formatResults(results) }] };
    } catch (error) {
      // console.error writes to stderr; on the stdio transport, stdout carries protocol messages
      console.error("search_documents failed:", error);
      return {
        content: [{
          type: "text",
          text: "Error: Unable to search documents right now. Please try again later.",
        }],
        isError: true,
      };
    }
  }
);
```

Key rules for MCP error handling:

- Never expose stack traces — the AI might repeat them to users

- Return actionable messages — "Try a more specific query" is better than "500 Internal Server Error"

- Use isError: true (TypeScript) to signal errors to the AI model

- Log full errors server-side for your own debugging
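Repeating the same try/except in every tool gets tedious. In the Python SDK's FastMCP, an exception that escapes a tool is generally reported to the client as an error result that includes the exception's message, which is exactly what you want to avoid for unexpected failures. One option, sketched below with the standard library only, is a decorator that logs the full exception server-side and returns a short, safe message. `safe_tool` and `flaky_search` are hypothetical names; in a real server you would stack it directly under the SDK decorator (`@server.tool(...)` on top, `@safe_tool` below it) and check in the Inspector that the tool's parameters still show up.

```python
import functools
import logging
from collections.abc import Awaitable, Callable

logger = logging.getLogger("mcp-tools")


def safe_tool(fn: Callable[..., Awaitable[str]]) -> Callable[..., Awaitable[str]]:
    """Wrap an async tool so unexpected exceptions become a short, safe message."""

    @functools.wraps(fn)  # copies name, docstring, annotations and sets __wrapped__
    async def wrapper(*args, **kwargs) -> str:
        try:
            return await fn(*args, **kwargs)
        except Exception:
            # Full traceback goes to the server-side log, never back to the model
            logger.exception("Tool %s failed", fn.__name__)
            return f"Error: {fn.__name__} failed unexpectedly. Please try again later."

    return wrapper


@safe_tool
async def flaky_search(query: str) -> str:
    """Hypothetical tool whose backend rejects the connection."""
    # The exception text contains a secret that must not reach the model
    raise ConnectionError("login failed for password=hunter2")
```

Because `functools.wraps` sets `__wrapped__`, `inspect.signature(flaky_search)` still reports the original `query` parameter, which is what schema generation relies on.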

How Do You Test Your MCP Server?

Testing MCP servers involves three layers: the MCP Inspector CLI for interactive debugging, Claude Desktop for real-world AI integration testing, and automated unit tests for CI/CD pipelines. Start with the Inspector — it shows you exactly what the AI model sees when it discovers your tools.

1. MCP Inspector (Interactive Testing)

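The mcp CLI installed by mcp[cli] has a dev command that starts your server under the MCP Inspector so you can call tools manually. The Inspector runs through npx, so Node.js needs to be installed even for Python servers:

```bash
# Python: mcp dev runs your server through uv, so uv must be installed
mcp dev server.py

# Without uv, or for TypeScript, launch the Inspector directly
npx @modelcontextprotocol/inspector python server.py
npx @modelcontextprotocol/inspector node server.js
```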

This opens a web UI where you can:

- See all registered tools with their descriptions

- Call each tool with custom inputs

- View the raw JSON-RPC messages

- Debug parameter validation issues

2. Claude Desktop (Integration Testing)

Once connected (see section above), test with natural language:

- "Add a task called Test MCP server with high priority"

- "Show me all tasks"

- "Complete the first task"

Watch how Claude interprets your tool descriptions and sends parameters.

3. Unit Tests (Automated Testing)

```python
import pytest

from server import add_task, list_tasks, complete_task, tasks


@pytest.fixture(autouse=True)
def clear_tasks():
    tasks.clear()
    yield


@pytest.mark.asyncio
async def test_add_task():
    result = await add_task("Write docs", "high")
    assert "Task added" in result
    assert len(tasks) == 1
    assert tasks[0]["priority"] == "high"


@pytest.mark.asyncio
async def test_complete_task():
    await add_task("Test task", "medium")
    task_id = tasks[0]["id"]
    result = await complete_task(task_id)
    assert "complete" in result.lower()
    assert tasks[0]["completed"] is True


@pytest.mark.asyncio
async def test_complete_nonexistent_task():
    result = await complete_task("fake-id")
    assert "Error" in result
```

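Install the test dependencies with pip install pytest pytest-asyncio (the @pytest.mark.asyncio marker comes from the pytest-asyncio plugin), then run pytest -v and add it to your CI pipeline.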

How Do You Deploy an MCP Server to Production?

For personal use with Claude Desktop or Cursor, your MCP server runs locally on your machine, so there is nothing to deploy. For shared or always-on access, deploy it as a remote HTTP server using Docker on any cloud platform (AWS, GCP, Railway, Fly.io). The SDKs support both stdio (local) and Streamable HTTP (remote) transports; Streamable HTTP replaced the older HTTP+SSE transport in the 2025-03-26 revision of the MCP specification.

Local (stdio) — Personal Use

This is what we've been building. Your server communicates via stdin/stdout, and Claude Desktop manages the process. Perfect for:

- Personal productivity tools

- Development and testing

- Cursor / Windsurf integration

Remote (Streamable HTTP) — Shared Access

For team use or production, serve the same tools over HTTP. FastMCP can build the web app for you:

```python
from mcp.server.fastmcp import FastMCP

# Listen on all interfaces so the server is reachable from outside a container
server = FastMCP("task-manager", host="0.0.0.0")

# ... register tools with @server.tool(...) exactly as before ...

# ASGI app serving the Streamable HTTP transport (at the /mcp path by default);
# uvicorn loads this object as server:app
app = server.streamable_http_app()

if __name__ == "__main__":
    # Alternatively, let the SDK start its own uvicorn server (port 8000 by default)
    server.run(transport="streamable-http")
```

If you must support older clients that only speak the legacy HTTP+SSE transport, FastMCP also provides server.sse_app().

Docker Deployment

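```dockerfile
FROM python:3.11-slim
WORKDIR /app
# requirements.txt lists your dependencies, e.g. mcp[cli], httpx, uvicorn
COPY requirements.txt .
RUN pip install --no-cache-dir -r requirements.txt
COPY . .
EXPOSE 8000
# Serves the app object defined in server.py
CMD ["uvicorn", "server:app", "--host", "0.0.0.0", "--port", "8000"]
```

```bash
docker build -t my-mcp-server .
docker run -p 8000:8000 my-mcp-server
```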

For most use cases, local is enough to start. Deploy to the cloud when you need multiple users or always-on access. If you need help with production deployment, check our MCP server development services.

What Are Common Mistakes When Building MCP Servers?

The most common mistake is not validating tool inputs — AI models sometimes send unexpected data types or missing fields, which crashes unprotected servers. The second most common issue is having too many tools, which confuses the AI model about which tool to pick. Keep your server focused with fewer than 15 tools.

Here are the 7 mistakes we see most often (after building 70+ AI products):

- Not validating input — The AI might send priority: "urgent" instead of "high". Always use Pydantic/Zod schemas.

- No error handling — One failed API call shouldn't kill the entire server. Wrap every tool in try/catch.

- Exposing secrets in tool responses — If your tool returns raw API responses containing tokens or keys, the AI might repeat them to the user. Sanitize all output.

- Too many tools — AI models get confused with 50+ tools. Keep it under 15 tools per server. Split into multiple servers if needed.

- No rate limiting — One enthusiastic user (or one enthusiastic AI) can burn through your entire API quota in minutes. Add rate limits to tools that call external APIs.

- Blocking the event loop — MCP tools are async. A synchronous time.sleep(30) or a blocking HTTP call freezes the entire server. Always use asyncio/await.

- Not testing with real AI models — Unit tests pass, but when Claude actually uses your tool, the description is confusing and it picks the wrong tool. Always test with a real AI model before shipping.
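The rate-limiting and event-loop mistakes above can be handled together. The standard-library sketch below shows a simple token-bucket limiter in front of a tool that calls an external service, plus asyncio.to_thread to push a blocking call onto a worker thread so other tool calls keep flowing. `TokenBucket`, `call_slow_api`, and `lookup_weather` are hypothetical names, and the limits are placeholders to tune for your own API quota. The bucket lives in one process's memory, so a server running several replicas would need a shared limiter instead.

```python
import asyncio
import time


class TokenBucket:
    """Allow bursts of up to `capacity` calls, refilled at `rate` tokens per second."""

    def __init__(self, capacity: int, rate: float) -> None:
        self.capacity = capacity
        self.rate = rate
        self.tokens = float(capacity)
        self.updated = time.monotonic()

    def try_acquire(self) -> bool:
        now = time.monotonic()
        # Refill in proportion to elapsed time, never above capacity
        self.tokens = min(self.capacity, self.tokens + (now - self.updated) * self.rate)
        self.updated = now
        if self.tokens >= 1:
            self.tokens -= 1
            return True
        return False


# Placeholder limits: bursts of 5 calls, then one call every 2 seconds
weather_limiter = TokenBucket(capacity=5, rate=0.5)


def call_slow_api(city: str) -> str:
    """Hypothetical blocking client call; it must not run directly on the event loop."""
    time.sleep(0.1)
    return f"Sunny in {city}"


async def lookup_weather(city: str) -> str:
    """Tool body: check the rate limit first, then run the blocking call in a thread."""
    if not weather_limiter.try_acquire():
        return "Error: Rate limit reached for the weather service. Try again in a few seconds."
    return await asyncio.to_thread(call_slow_api, city)
```

try_acquire contains no await, so on a single event loop no other coroutine can interleave with its read-modify-write of the token count.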

What's Next After Your First MCP Server?

After building your first MCP server, the natural next steps are adding authentication (OAuth 2.0), connecting to real databases instead of in-memory storage, and publishing your server to MCP directories so others can discover it. The jump from tutorial to production typically takes 1-2 weeks of focused work.

Here's your roadmap:

- Add OAuth 2.0 authentication — Required for any remote server handling sensitive data. MCP's authorization spec covers HTTP transports (local stdio servers read credentials from the environment instead), and the SDKs include built-in auth support.

- Connect to real databases — Replace in-memory storage with PostgreSQL or another database. Your tools become persistent and useful across sessions.

- Add Resources — Beyond tools, MCP supports read-only resources. Expose files, database records, or API data that the AI can browse without calling a function.

- Build multi-tool workflows — Create tools that work together. Example: search_customers → get_customer_details → create_support_ticket.

- Publish to MCP directories — Share your server with the community. List it on MCP directories so other developers can use it.

- Go to production — Add monitoring, logging, rate limiting, and deploy to the cloud.
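For the database step, here is a minimal sketch of swapping the in-memory tasks list for SQLite from Python's standard library. The roadmap suggests PostgreSQL for production; SQLite just keeps the example dependency-free. The `TaskStore` class, table layout, and `tasks.db` filename are assumptions for illustration. Your add_task, list_tasks, and complete_task tools would call these methods instead of touching the list, so tasks survive server restarts. The calls are synchronous; that is usually fine for a small local database, but a busier server would want an async driver.

```python
import sqlite3
from datetime import datetime
from uuid import uuid4


class TaskStore:
    """Persist tasks in SQLite instead of an in-memory list."""

    def __init__(self, path: str = "tasks.db") -> None:
        self.conn = sqlite3.connect(path)
        self.conn.row_factory = sqlite3.Row  # access columns by name
        self.conn.execute(
            """CREATE TABLE IF NOT EXISTS tasks (
                   id TEXT PRIMARY KEY,
                   title TEXT NOT NULL,
                   priority TEXT NOT NULL CHECK (priority IN ('low', 'medium', 'high')),
                   completed INTEGER NOT NULL DEFAULT 0,
                   created_at TEXT NOT NULL
               )"""
        )
        self.conn.commit()

    def add(self, title: str, priority: str = "medium") -> dict:
        task = {"id": str(uuid4())[:8], "title": title, "priority": priority,
                "completed": False, "created_at": datetime.now().isoformat()}
        with self.conn:  # commits on success, rolls back and re-raises on error
            self.conn.execute(
                "INSERT INTO tasks (id, title, priority, completed, created_at) VALUES (?, ?, ?, ?, ?)",
                (task["id"], title, priority, 0, task["created_at"]),
            )
        return task

    def list_all(self) -> list[dict]:
        rows = self.conn.execute("SELECT * FROM tasks ORDER BY created_at").fetchall()
        # SQLite stores booleans as 0/1, so convert back for callers
        return [{**dict(r), "completed": bool(r["completed"])} for r in rows]

    def complete(self, task_id: str) -> bool:
        with self.conn:
            cur = self.conn.execute("UPDATE tasks SET completed = 1 WHERE id = ?", (task_id,))
        return cur.rowcount == 1  # False when no task has that ID
```

The CHECK constraint gives you a second line of defense: an invalid priority that slipped past validation raises sqlite3.IntegrityError instead of being stored.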

Want us to build a production MCP server for your product? We've shipped 70+ AI products and specialize in MCP. Check our MCP development services →

Frequently Asked Questions

Is MCP free to use?

Yes. MCP is an open-source protocol. The Python and TypeScript SDKs are free and MIT-licensed. You only pay for hosting if you deploy your server to a cloud provider. Local use with Claude Desktop is completely free.

Which AI models support MCP?

As of March 2026, MCP is supported by Claude (Anthropic), ChatGPT (OpenAI), Gemini (Google), Cursor, Windsurf, Cline, and many other AI tools. The list grows every month as MCP becomes the industry standard for AI-tool connectivity.

Can I use MCP with my existing API?

Yes. An MCP server is a thin wrapper around your existing API. You don't need to change your current architecture at all. Your MCP server calls your API internally and exposes the results as tools that AI models can use.

How is MCP different from ChatGPT plugins?

MCP is an open standard that works across every AI platform. ChatGPT plugins only worked with ChatGPT and were limited in scope. MCP supports tools, resources, and prompts — giving AI models much richer access to your systems. MCP is the industry standard going forward.

Do I need to know AI/ML to build an MCP server?

No. MCP servers are regular Python or TypeScript programs that define tools. The AI model handles all the intelligence — understanding user intent, choosing the right tool, formatting the response. Your server just provides the functions. If you can write a REST API, you can build an MCP server.
